PDigit's AI PORTFOLIO

Blending AI efficiency with human interpretation

AI Agents and RAG

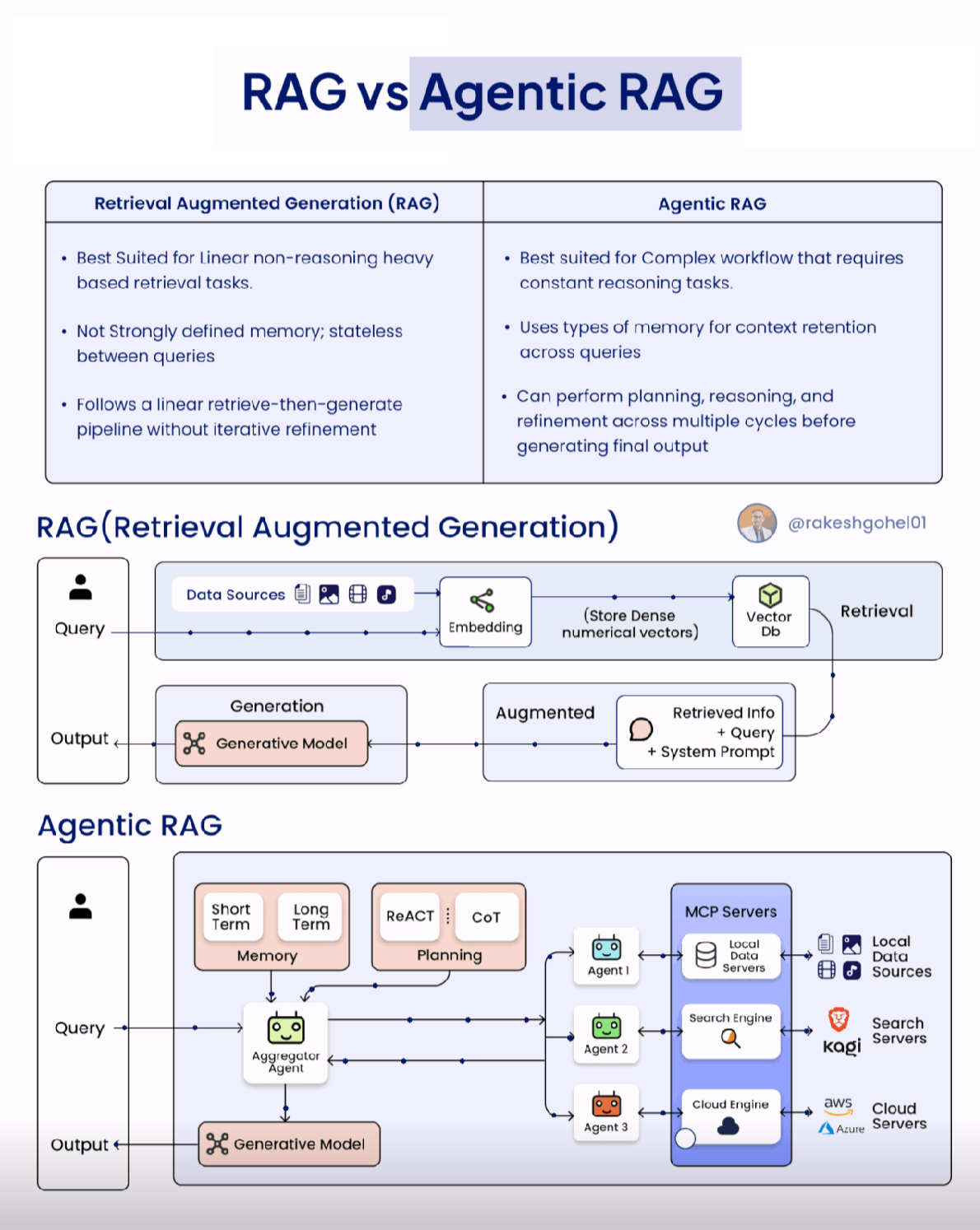

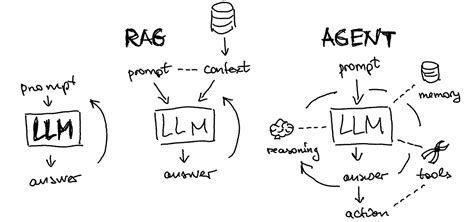

My team and I actively follow and implement the latest advances in AI Agents and Retrieval-Augmented Generation (RAG) systems. My expertise includes designing and deploying autonomous agents for tasks such as intelligent automation, personalized recommendations, and proactive problem solving. For RAG, I specialize in building robust pipelines that supply large language models with up-to-date, domain-specific knowledge, improving accuracy and reducing hallucinations. This includes experience with RAG techniques such as advanced retrieval strategies, knowledge fusion, and response generation optimization.

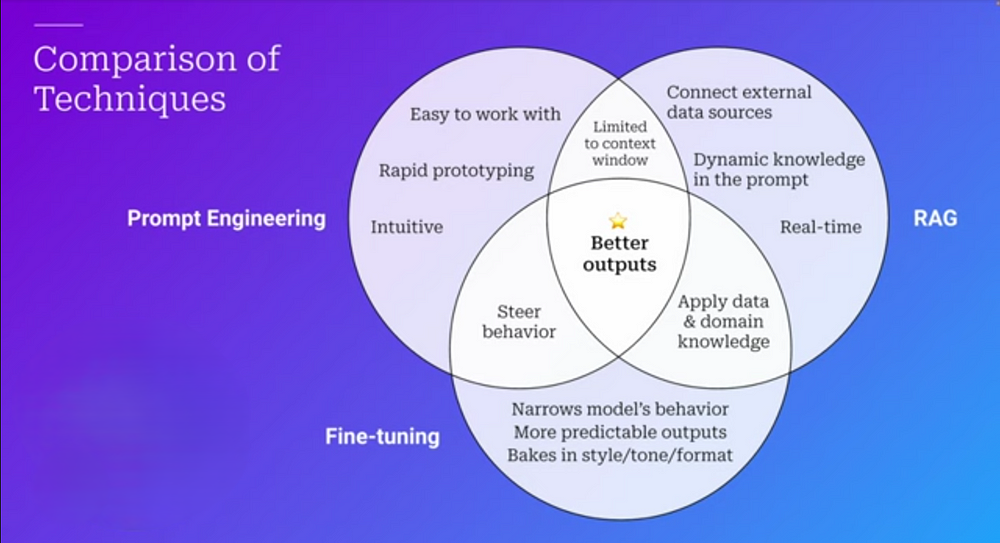

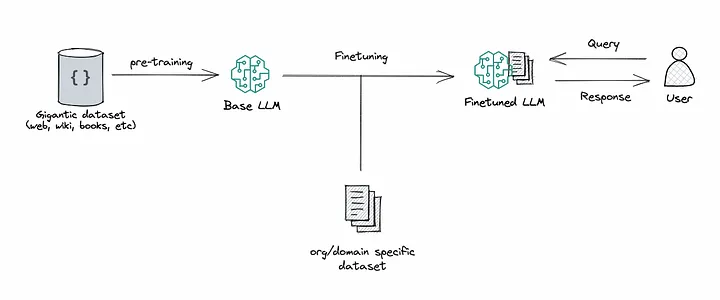

RAG vs Fine-Tuning

Both RAG and fine-tuning are powerful tools for enhancing the performance of LLM-based applications.

Many good resources cover these ‘new’ LLM trends in technical depth. This article only introduces the two techniques briefly.

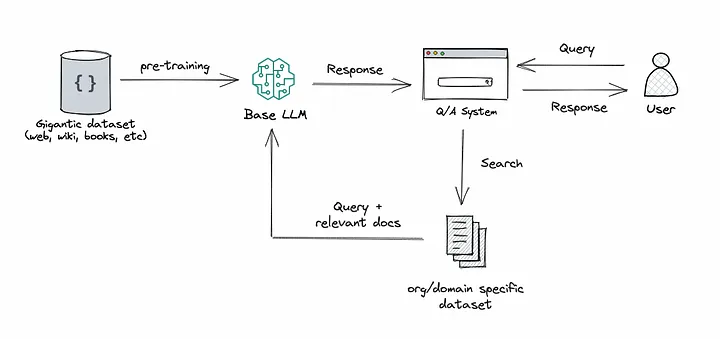

RAG: The Retrieval-Augmented Generation approach integrates retrieval (search) into LLM text generation. It combines a retriever, which fetches relevant document snippets from a large corpus, with an LLM, which produces answers using the information in those snippets. In essence, RAG lets the model “look up” external information to improve its responses.
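The retrieve-then-generate flow described above can be sketched in a few lines of Python. This is only an illustration under simplifying assumptions: the corpus, the function names (`tokenize`, `cosine`, `retrieve`, `build_prompt`) and the prompt wording are all hypothetical, and a bag-of-words cosine similarity stands in for the learned embeddings and vector database a real pipeline would use. The final call to an LLM is left out; the sketch stops at the prompt that would be sent to it.

```python
import math
import re
from collections import Counter

# Hypothetical toy corpus; a real system would index many documents.
CORPUS = [
    "RAG combines a retriever with a language model.",
    "Fine-tuning adjusts model weights on a task-specific dataset.",
    "Vector databases store embeddings for similarity search.",
]

def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, corpus, k=2):
    """Return the k snippets most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(
        corpus,
        key=lambda doc: cosine(q, Counter(tokenize(doc))),
        reverse=True,
    )
    return ranked[:k]

def build_prompt(query, snippets):
    """Assemble the prompt that would be passed to the LLM."""
    context = "\n".join(f"- {s}" for s in snippets)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )

question = "What do vector databases store?"
top = retrieve(question, CORPUS, k=1)
prompt = build_prompt(question, top)
```

Swapping the word-count similarity for embedding vectors changes only `retrieve`; the prompt assembly step stays the same.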

Fine-tuning: This is the process of taking a pre-trained LLM and training it further on a smaller, task-specific dataset to adapt it to a particular task or improve its performance. Fine-tuning adjusts the model’s weights based on our data, tailoring the model to our application’s needs.
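The idea of "adjusting the weights of a pre-trained model on a small dataset" can be shown with a deliberately tiny stand-in. The sketch below is not LLM code: it assumes a one-variable linear model whose starting weights play the role of the pre-trained model, and it runs plain gradient descent on a three-point, made-up task dataset. The names (`mse`, `fine_tune`, `task_data`) and the learning rate are illustrative assumptions.

```python
def mse(w, b, data):
    """Mean squared error of the model y = w * x + b on the dataset."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)

def fine_tune(w, b, data, lr=0.05, epochs=500):
    """Continue training from the given weights with gradient descent."""
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# "Pre-trained" weights (assumed): the model starts out as y = x.
pretrained_w, pretrained_b = 1.0, 0.0

# Small task-specific dataset (made up) that follows y = 2x + 1.
task_data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]

loss_before = mse(pretrained_w, pretrained_b, task_data)
tuned_w, tuned_b = fine_tune(pretrained_w, pretrained_b, task_data)
loss_after = mse(tuned_w, tuned_b, task_data)
```

Training starts from the existing weights rather than from scratch, which is the point the paragraph makes; with an LLM the same loop runs over billions of parameters and usually with a dedicated training library.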

Which is better?

Obviously both: the right choice depends on the specific application, and a mixed approach is often used.

For more information and technical details, see also (external link): RAG vs Finetuning: Which is the Best Tool to Boost Your LLM Application?

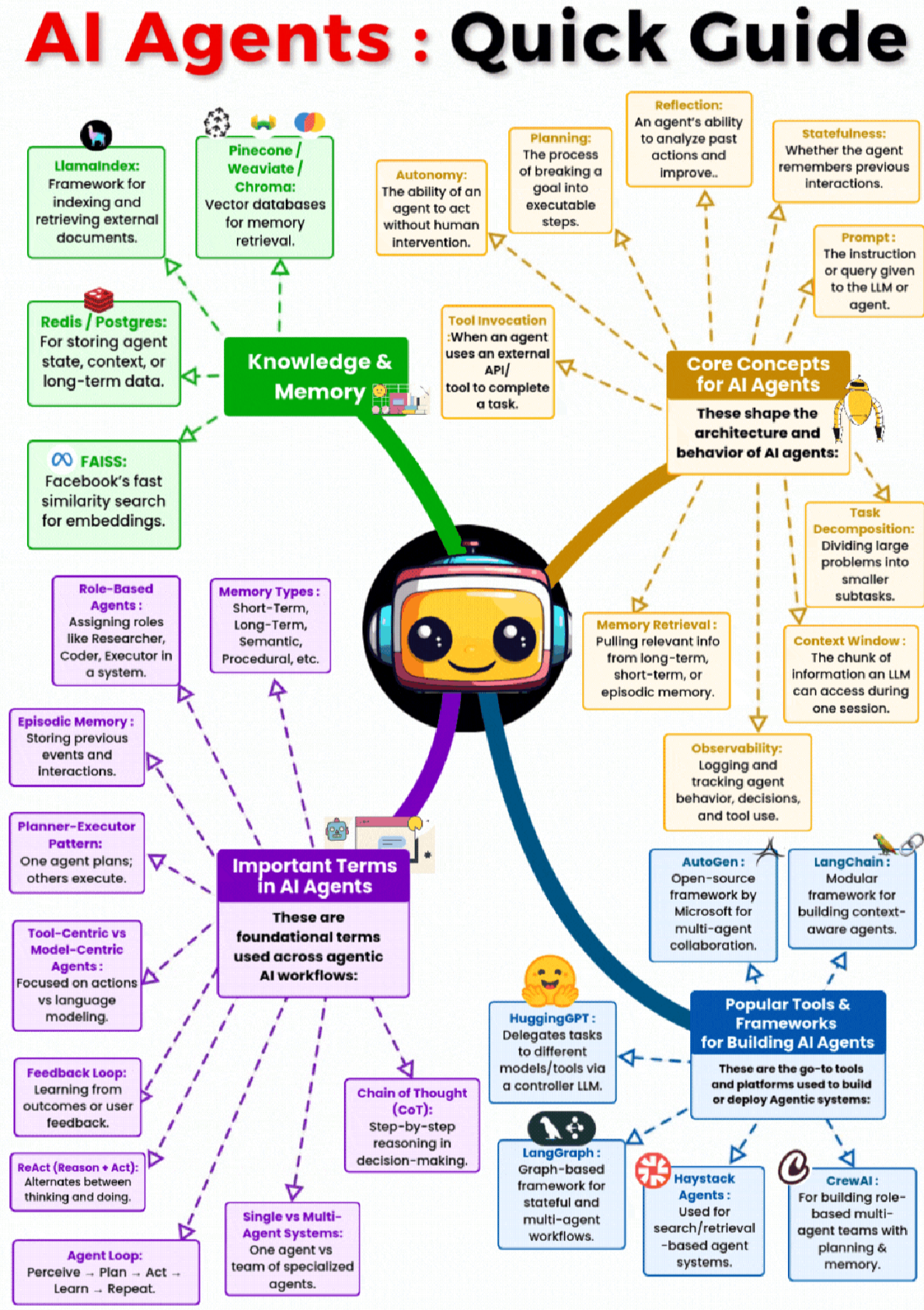

Here are some of the leading AI Agent frameworks and RAG tools/frameworks I have experience with:

AI Agent Frameworks:

- LangChain: A comprehensive framework for building applications powered by large language models, including powerful agent capabilities
- AutoGen (Microsoft): Enables building next-generation large language model applications based on multi-agent conversations
- CrewAI: Orchestrating role-playing, autonomous AI agents
- Semantic Kernel (Microsoft): An extensible and open-source SDK that allows you to easily build agents that can reason, plan, and act.

RAG Tools and Frameworks:

- LlamaIndex (formerly GPT Index): A data framework for building LLM applications over external data, offering robust RAG capabilities
- LangChain: As mentioned above, it also provides excellent modules for building RAG pipelines
- Vector Databases (e.g., Pinecone, ChromaDB, Weaviate, FAISS): Essential for efficient retrieval of relevant information for RAG
- Sentence Transformers: For creating high-quality embeddings of text data used in RAG systems

The best choice of framework or tool often depends on the specific requirements of the project, including complexity, scale, and integration needs. I can help you evaluate these options and choose the most suitable technology stack for your AI Agent and RAG initiatives.

AI Agents Quick Guide

The current (2025) frontier: designing systems in which LLMs are just one (powerful) piece of the puzzle
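A minimal sketch of what "one piece of the puzzle" can mean in practice: the model only decides the next action, while ordinary code supplies the tools, the memory, and the control loop. Everything here is a hypothetical illustration, not the API of any framework listed above. In particular, `fake_llm` is a rule-based stand-in for a real model call, and the `calculator` tool only handles expressions of the form `a + b`.

```python
def fake_llm(goal, history):
    """Stand-in for an LLM call (hypothetical): picks the next action by rule."""
    if not history:
        # Nothing has been tried yet: ask the calculator tool.
        return {"tool": "calculator", "input": goal}
    # A result is available: finish with the latest observation.
    return {"tool": "finish", "input": history[-1]["observation"]}

def calculator(expression):
    """Toy tool: adds two integers written as 'a + b'."""
    left, right = expression.split("+")
    return str(int(left) + int(right))

# Tool registry: the part of the system that is plain code, not the model.
TOOLS = {"calculator": calculator}

def run_agent(goal, max_steps=5):
    """Loop: ask the model for an action, run the tool, record the result."""
    history = []  # simple memory of past actions and observations
    for _ in range(max_steps):
        action = fake_llm(goal, history)
        if action["tool"] == "finish":
            return action["input"], history
        observation = TOOLS[action["tool"]](action["input"])
        history.append({"action": action, "observation": observation})
    return None, history  # step budget exhausted without an answer

answer, trace = run_agent("2 + 3")
```

Replacing `fake_llm` with a real model call leaves the loop, the tool registry, and the step limit unchanged, which is why the surrounding system design matters as much as the model itself.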

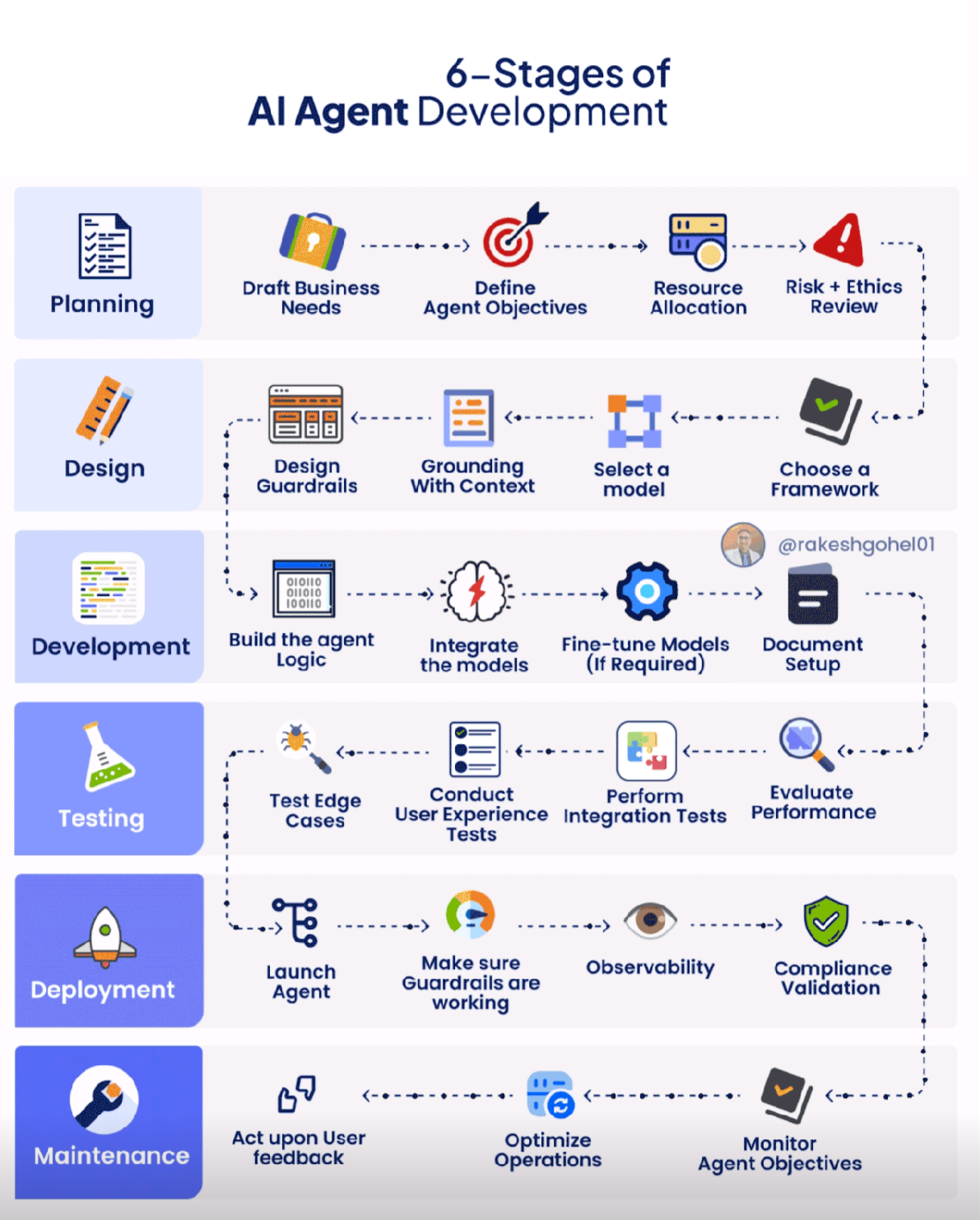

AI Agent Development Workflow

I can provide ad hoc training courses on AI/ML technologies for companies and educational institutions that want to keep their staff up to date.